Difference between revisions of "Main Page"

(Using purge template, because we apparently have one.) |

|||

| (6 intermediate revisions by 2 users not shown) | |||

| Line 1: | Line 1: | ||

| − | __NOTOC__ | + | __NOTOC__{{DISPLAYTITLE:explain xkcd}} |

| − | {{DISPLAYTITLE:explain xkcd}} | ||

| − | |||

<center> | <center> | ||

| − | < | + | <font size=5px>''Welcome to the '''explain [[xkcd]]''' wiki!''</font> |

| − | |||

| − | |||

| − | |||

| − | ( | + | We have collaboratively explained [[:Category:Comics|'''{{#expr:{{PAGESINCAT:Comics}}-9}}''' xkcd comics]], |

| + | <!-- Note: the -9 in the calculation above is to discount subcategories (there are 8 of them as of 2013-02-27), | ||

| + | as well as [[List of all comics]], which is obviously not a comic page. --> | ||

| + | and only {{#expr:{{LATESTCOMIC}}-({{PAGESINCAT:Comics}}-9)}} | ||

| + | ({{#expr: ({{LATESTCOMIC}}-({{PAGESINCAT:Comics}}-9)) / ({{PAGESINCAT:Comics}}-9) * 100 round 0}}%) | ||

| + | remain. '''[[Help:How to add a new comic explanation|Add yours]]''' while there's a chance! | ||

</center> | </center> | ||

| − | |||

== Latest comic == | == Latest comic == | ||

| − | |||

<div style="border:1px solid grey; background:#eee; padding:1em;"> | <div style="border:1px solid grey; background:#eee; padding:1em;"> | ||

<span style="float:right;">[[{{LATESTCOMIC}}|'''Go to this comic explanation''']]</span> | <span style="float:right;">[[{{LATESTCOMIC}}|'''Go to this comic explanation''']]</span> | ||

| Line 28: | Line 26: | ||

* If you're new to wikis like this, take a look at these help pages describing [[mw:Help:Navigation|how to navigate]] the wiki, and [[mw:Help:Editing pages|how to edit]] pages. | * If you're new to wikis like this, take a look at these help pages describing [[mw:Help:Navigation|how to navigate]] the wiki, and [[mw:Help:Editing pages|how to edit]] pages. | ||

| − | * Discussion about various parts of the wiki is going on at [[Explain XKCD:Community portal]]. | + | * Discussion about various parts of the wiki is going on at [[Explain XKCD:Community portal]]. Share your 2¢! |

| − | * [[List of all comics]] contains a complete table of all xkcd comics so far and the corresponding explanations. The red links ([[like this]]) are missing explanations. Feel free to help out by creating them! | + | * [[List of all comics]] contains a complete table of all xkcd comics so far and the corresponding explanations. The red links ([[like this]]) are missing explanations. Feel free to help out by creating them! [[Help:How to add a new comic explanation|Here's how]]. |

== Rules == | == Rules == | ||

| − | Don't be a jerk. | + | Don't be a jerk. There are a lot of comics that don't have set in stone explanations; feel free to put multiple interpretations in the wiki page for each comic. |

If you want to talk about a specific comic, use its discussion page. | If you want to talk about a specific comic, use its discussion page. | ||

| − | Please only submit material directly related to | + | Please only submit material directly related to —and helping everyone better understand— xkcd... and of course ''only'' submit material that can legally be posted (and freely edited.) Off-topic or other inappropriate content is subject to removal or modification at admin discretion, and users who repeatedly post such content will be blocked. |

If you need assistance from an admin, feel free to leave a message on their personal discussion page. The list of admins is [[Special:ListUsers/sysop|here]]. | If you need assistance from an admin, feel free to leave a message on their personal discussion page. The list of admins is [[Special:ListUsers/sysop|here]]. | ||

| − | |||

| − | |||

| − | |||

| − | |||

[[Category:Root category]] | [[Category:Root category]] | ||

Revision as of 05:35, 4 March 2013

Welcome to the explain xkcd wiki!

We have collaboratively explained 6 xkcd comics, and only 2914 (48567%) remain. Add yours while there's a chance!
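The counts in the sentence above come from the `{{#expr:...}}` calls in the wikitext. As a rough sketch of that arithmetic only (not the wiki's actual implementation), the same calculation can be written in Python. The function name `remaining_stats` and the inputs `latest_comic=2920` and `pages_in_cat=15` are assumptions, back-calculated from the rendered figures rather than read from the wiki.

```python
def remaining_stats(latest_comic, pages_in_cat, non_comic_pages=9):
    """Mirror the Main Page arithmetic (hypothetical helper, not wiki code).

    non_comic_pages is the "-9" from the wikitext comment: 8 subcategories
    plus the "List of all comics" page.
    """
    explained = pages_in_cat - non_comic_pages   # {{PAGESINCAT:Comics}}-9
    remaining = latest_comic - explained         # {{LATESTCOMIC}}-(...)
    # "round 0" in the wikitext; Python's round() can differ on exact .5 ties.
    percent = round(remaining / explained * 100)
    return explained, remaining, percent


# Assumed inputs, inferred from the rendered sentence.
explained, remaining, percent = remaining_stats(latest_comic=2920, pages_in_cat=15)
print(f"{explained} explained, {remaining} ({percent}%) remain")
```

The percentage is computed relative to the explained count, not the total, which is why it can far exceed 100% when few pages are in the category.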

Latest comic

Explanation

This explanation may be incomplete or incorrect: Created by a GUIDED MISSILE THAT MISSED THE TARGET DUE TO COORDINATE DRIFT - Please change this comment when editing this page. Do NOT delete this tag too soon.

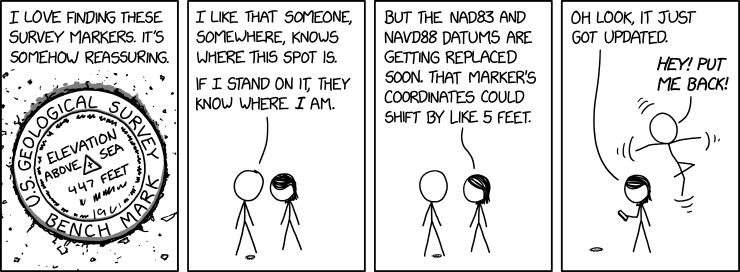

Cueball and Megan have found a survey marker on the ground. Survey markers such as these are used as reference points for the NAD 83 and NAVD 88 geodetic reference systems, and the U.S. National Geodetic Survey has a database storing the coordinates of these markers. However, those two systems are being replaced by the New Datums of 2022 (delayed to 2024-2025), which are primarily based on satellite systems and gravimetric models.

In the comic, when the database is updated, Cueball's position changes to compensate. In reality, updating a database to change the coordinates of a location would not physically move items at the location. Arguably, if it did, no one would much notice, since everything surrounding them would move simultaneously to its corrected position as well.

The title text refers to NAD 83 being around 7 feet off. This means off relative to both WGS84 and, presumably, the new datum.

Absurd outcomes from differing survey standards were also the topic of 2888: US Survey Foot.

Transcript

- [Zoomed-in view of a round marker on the ground, with small specks of dirt around it. One line of text goes around the central part in the outer rim of the marker, with the first three words written around the top and the last two words written around the bottom (so the text does not run all the way around in a single line). Inside this rim there is more text on three lines. In the center there is a small cross in a triangle pointing up in relation to the central text. There is more unreadable text below the last line of text and around the inner part of the rim. An off-panel voice, which in the next panel turns out to be Cueball's, is written above the marker.]

- U.S. Geological survey bench mark

- Elevation above sea 447 feet

- Cueball (off-panel): I love finding these survey markers. It's somehow reassuring.

- [Cueball and Megan are shown looking down at the marker. Cueball has one leg on either side of the marker and Megan stands to the right.]

- Cueball: I like that someone, somewhere, knows where this spot is.

- Cueball: If I stand on it, they know where I am.

- [Cueball and Megan look up at each other.]

- Megan: But the NAD83 and NAVD88 datums are getting replaced soon. That marker's coordinates could shift by like 5 feet.

- [Megan is looking down at the phone in her hand, standing in the same position relative to the marker. But Cueball is now floating in the air behind her, 5 feet above the ground, flailing his arms and legs (as shown by three small curved lines at the end of each arm and above and below him).]

- Megan: Oh look, it just got updated.

- Cueball: Hey! Put me back!


New here?

You can read a brief introduction about this wiki at explain xkcd. Feel free to sign up for an account and contribute to the wiki! We need explanations for comics, characters, themes, memes and everything in between. If it is referenced in an xkcd web comic, it should be here.

- If you're new to wikis like this, take a look at these help pages describing how to navigate the wiki, and how to edit pages.

- Discussion about various parts of the wiki is going on at Explain XKCD:Community portal. Share your 2¢!

- List of all comics contains a complete table of all xkcd comics so far and the corresponding explanations. The red links (like this) are missing explanations. Feel free to help out by creating them! Here's how.

Rules

Don't be a jerk. There are a lot of comics that don't have set in stone explanations; feel free to put multiple interpretations in the wiki page for each comic.

If you want to talk about a specific comic, use its discussion page.

Please only submit material directly related to —and helping everyone better understand— xkcd... and of course only submit material that can legally be posted (and freely edited.) Off-topic or other inappropriate content is subject to removal or modification at admin discretion, and users who repeatedly post such content will be blocked.

If you need assistance from an admin, feel free to leave a message on their personal discussion page. The list of admins is here.