Difference between revisions of "2059: Modified Bayes' Theorem"

TobyBartels (talk | contribs) (Everything's better with transfinite ordinal numbers!)

(→Explanation: Expanded the mathematical explanation)


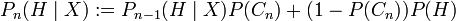

| + | If we denote the probability that the Modified<sup>n</sup> Bayes' Theorem is correct by <math>P(C_n)</math>, then one way to define this sequence of modified Bayes' theorems is by the rule <math>P_n(H \mid X) := P_{n-1}(H \mid X) P(C_n) + (1-P(C_n))P(H)</math> | ||

| + | |||

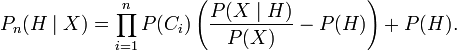

| + | One can then show by induction that <math>P_n(H \mid X) = \prod_{i=1}^n P(C_i)\left(\frac{P(X \mid H)}{P(X)} - P(H) \right) + P(H).</math> | ||

==Transcript== | ==Transcript== | ||

Revision as of 18:15, 16 October 2018

Modified Bayes' Theorem

Title text: Don't forget to add another term for "probability that the Modified Bayes' Theorem is correct."

Explanation


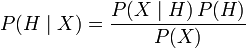

Bayes' theorem is:

<math>P(H \mid X) = \frac{P(X \mid H) \, P(H)}{P(X)},</math>

where

- <math>P(H \mid X)</math> is the probability that <math>H</math>, the hypothesis, is true given observation <math>X</math>. This is called the posterior probability.
- <math>P(X \mid H)</math> is the probability that observation <math>X</math> will appear given the truth of hypothesis <math>H</math>. This term is often called the likelihood.
- <math>P(H)</math> is the probability that hypothesis <math>H</math> is true before any observations. This is called the prior, or belief.
- <math>P(X)</math> is the probability of the observation <math>X</math> regardless of which hypothesis might have produced it. This term is called the marginal likelihood.
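As a rough illustration of how the four terms fit together, here is a minimal Python sketch. The numbers are hypothetical and chosen only for illustration (a rare hypothesis and a fairly reliable observation); the function and variable names are not from the comic.

```python
def posterior(prior, likelihood, evidence):
    """Bayes' theorem: P(H|X) = P(X|H) * P(H) / P(X)."""
    if evidence <= 0:
        raise ValueError("P(X) must be positive")
    return likelihood * prior / evidence


# Hypothetical numbers, for illustration only.
p_h = 0.01              # P(H): prior probability of the hypothesis
p_x_given_h = 0.95      # P(X|H): likelihood of the observation if H is true
p_x_given_not_h = 0.05  # P(X|not H): chance of the observation if H is false

# P(X), the marginal likelihood, via the law of total probability.
p_x = p_x_given_h * p_h + p_x_given_not_h * (1 - p_h)

p_h_given_x = posterior(p_h, p_x_given_h, p_x)
print(round(p_h_given_x, 4))
```

With these made-up inputs the posterior comes out at roughly 0.16: even a fairly reliable observation leaves a rare hypothesis unlikely, because the prior is so small.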

The purpose of Bayesian inference is to discover something we want to know (how likely is it that our explanation is correct given the evidence we've seen) by mathematically expressing it in terms of things we can find out: how likely are our observations, how likely is our hypothesis a priori, and how likely are we to see the observations we've seen assuming our hypothesis is true. A Bayesian learning system will iterate over available observations, each time using the likelihood of new observations to update its priors (beliefs) with the hope that, after seeing enough data points, the prior and posterior will converge to a single model.
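The iteration described above can be sketched in Python as follows. This is only an illustrative toy, assuming a binary hypothesis (a coin is biased towards heads, versus fair) and made-up likelihoods; each posterior becomes the prior for the next observation.

```python
def update(prior, p_x_given_h, p_x_given_not_h):
    """One Bayesian update for a binary hypothesis H versus not-H."""
    evidence = p_x_given_h * prior + p_x_given_not_h * (1 - prior)
    return p_x_given_h * prior / evidence


def run_updates(prior, observations, p_heads_h=0.8, p_heads_not_h=0.5):
    """Fold a sequence of coin flips (1 = heads, 0 = tails) into the belief.

    The default likelihoods are hypothetical: H says the coin lands heads
    80% of the time, not-H says it is fair.
    """
    beliefs = [prior]
    for flip in observations:
        if flip == 1:
            lik_h, lik_not_h = p_heads_h, p_heads_not_h
        else:
            lik_h, lik_not_h = 1 - p_heads_h, 1 - p_heads_not_h
        prior = update(prior, lik_h, lik_not_h)  # posterior becomes new prior
        beliefs.append(prior)
    return beliefs


beliefs = run_updates(0.5, [1, 1, 0, 1, 1, 1, 1, 0])
for step, belief in enumerate(beliefs):
    print(step, round(belief, 3))
```

Because each update multiplies by a likelihood ratio, the final belief does not depend on the order in which the same observations arrive.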

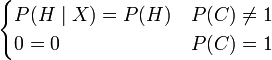

If <math>P(C) = 1</math>, the modified theorem reverts to the original Bayes' theorem (which makes sense, as a probability of one would mean certainty that you are using Bayes' theorem correctly).

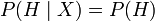

If <math>P(C) = 0</math>, the modified theorem becomes <math>P(H \mid X) = P(H)</math>, which says that the belief in your hypothesis is not affected by the result of the observation (which makes sense because you're certain you're misapplying the theorem, so the outcome of the calculation shouldn't affect your belief).

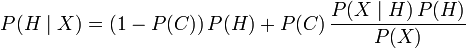

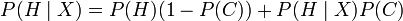

This happens because the modified theorem can be rewritten as: <math>P(H \mid X) = (1 - P(C)) \, P(H) + P(C) \, \frac{P(X \mid H) \, P(H)}{P(X)}</math>. This is the linear-interpolated weighted average of the belief you had before the calculation and the belief you would have if you applied the theorem correctly. This goes smoothly from not believing your calculation at all (keeping the same belief as before) if <math>P(C) = 0</math> to changing your belief exactly as Bayes' theorem suggests if <math>P(C) = 1</math>. (Note that <math>1 - P(C)</math> is the probability that you are using the theorem incorrectly.)
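The two limiting cases and the weighted-average reading can be checked numerically. The sketch below is only illustrative: the function names and input values are made up, and the first function follows the formula as given in the transcript.

```python
from math import isclose


def bayes(p_h, p_x_given_h, p_x):
    """Ordinary Bayes' theorem: P(X|H) * P(H) / P(X)."""
    return p_x_given_h * p_h / p_x


def modified_bayes(p_h, p_x_given_h, p_x, p_c):
    """The comic's form: P(H) * (1 + P(C) * (P(X|H)/P(X) - 1))."""
    return p_h * (1 + p_c * (p_x_given_h / p_x - 1))


def modified_bayes_interpolated(p_h, p_x_given_h, p_x, p_c):
    """Weighted average of the prior and the ordinary Bayes posterior."""
    return (1 - p_c) * p_h + p_c * bayes(p_h, p_x_given_h, p_x)


# Hypothetical inputs, for illustration only.
p_h, p_x_given_h, p_x = 0.3, 0.8, 0.5

for p_c in (0.0, 0.25, 0.5, 0.75, 1.0):
    a = modified_bayes(p_h, p_x_given_h, p_x, p_c)
    b = modified_bayes_interpolated(p_h, p_x_given_h, p_x, p_c)
    assert isclose(a, b)  # the two forms agree for every P(C) tried
    print(p_c, round(a, 4))
```

At `p_c = 0.0` the result equals the prior, at `p_c = 1.0` it equals the ordinary posterior, and the values in between move linearly from one to the other.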

The title text suggests that an additional term should be added for the probability that the Modified Bayes' Theorem is correct. But that's this equation, so it would make the formula self-referential, unless we call the result the Modified Modified Bayes' Theorem (or Modified<sup>2</sup>). It could also result in an infinite regress: we'd need another term for the probability that the version with the probability added is correct, and another term for that version, and so on. If the modifications have a limit, then we can make that the Modified<sup>ω</sup> Bayes' Theorem, but then we need another term for whether we did that correctly, leading to the Modified<sup>ω+1</sup> Bayes' Theorem, and so on through every ordinal number. It's also unclear what the point is of using an equation we're not sure of (although sometimes such an equation is still useful: Newton's Laws are not as correct as Einstein's Theory of Relativity, but they're a reasonable approximation in most circumstances. Alternatively, ask any student taking a difficult exam with a formula sheet.).

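If we denote the probability that the Modified<sup>n</sup> Bayes' Theorem is correct by <math>P(C_n)</math>, then one way to define this sequence of modified Bayes' theorems is by the rule

<math>P_n(H \mid X) := P_{n-1}(H \mid X) \, P(C_n) + (1 - P(C_n)) \, P(H),</math>

starting from the ordinary Bayes posterior <math>P_0(H \mid X) = \frac{P(X \mid H) \, P(H)}{P(X)}</math>.

One can then show by induction that

<math>P_n(H \mid X) = \prod_{i=1}^n P(C_i) \left( \frac{P(X \mid H) \, P(H)}{P(X)} - P(H) \right) + P(H).</math>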
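A quick numerical check of this induction claim can be sketched in Python. It assumes the recursion P_n = P_{n-1} · P(C_n) + (1 − P(C_n)) · P(H) with the ordinary Bayes posterior as P_0, and compares it with the closed form ∏ P(C_i) · (P_0 − P(H)) + P(H). The function names and numbers are hypothetical.

```python
from math import isclose, prod


def bayes(p_h, p_x_given_h, p_x):
    """Ordinary Bayes' theorem, used here as the base case P_0."""
    return p_x_given_h * p_h / p_x


def modified_n(p_h, p_x_given_h, p_x, p_c_list):
    """Apply the recursion once for each confidence P(C_1), ..., P(C_n)."""
    p = bayes(p_h, p_x_given_h, p_x)
    for p_c in p_c_list:
        p = p * p_c + (1 - p_c) * p_h
    return p


def closed_form(p_h, p_x_given_h, p_x, p_c_list):
    """Closed form: prod(P(C_i)) * (P_0 - P(H)) + P(H)."""
    return prod(p_c_list) * (bayes(p_h, p_x_given_h, p_x) - p_h) + p_h


# Hypothetical inputs, for illustration only.
p_h, p_x_given_h, p_x = 0.3, 0.8, 0.5
confidences = [0.9, 0.8, 0.5]

recursive = modified_n(p_h, p_x_given_h, p_x, confidences)
direct = closed_form(p_h, p_x_given_h, p_x, confidences)
print(round(recursive, 4), round(direct, 4))
```

Each extra layer of doubt multiplies the distance from the prior by another factor of at most one, so unless every confidence equals 1, the result is pulled back towards <math>P(H)</math>.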

Transcript


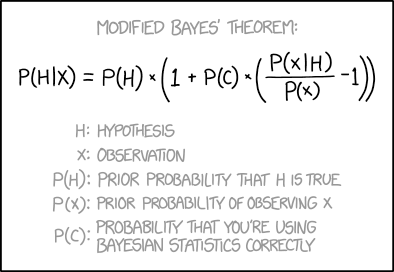

- Modified Bayes' theorem:

- P(H|X) = P(H) × (1 + P(C) × ( P(X|H)/P(X) - 1 ))

- H: Hypothesis

- X: Observation

- P(H): Prior probability that H is true

- P(X): Prior probability of observing X

- P(C): Probability that you're using Bayesian statistics correctly

Discussion

Right now the layout is awful:

- "If
  P(C)=1
  the..."

should look like this:

- "If P(C)=1 the..."

But there is more wrong right now. Look at a typical Wikipedia article, the Math-extension should be used for formulas but not in the floating text. --Dgbrt (talk) 20:03, 15 October 2018 (UTC)

- Credit for a good explanation though. It made perfect sense to me, even though I didn't understand it. 162.158.167.42 04:14, 16 October 2018 (UTC)

I removed this, because it makes no sense:

- As an equation, the rewritten form makes no sense. [formula] is strangely self-referential and reduces to the piecewise equation [formula]. However, the Modified Bayes Theorem includes an extra variable not listed in the conditioning, so a person with an AI background might understand that Randall was trying to write an expression for updating [formula] with knowledge of [formula], i.e. [formula], the belief in the hypothesis given the observation <math>X</math> and the confidence that you were applying Bayes' theorem correctly [formula], for which the expression [formula] makes some intuitive sense.

Between removing it and posting here, I think that I've figured out what it's saying. But it comes down to criticizing a mistake made in an earlier edit by the same editor, so I'll just fix that mistake instead.

—TobyBartels (talk) 13:03, 16 October 2018 (UTC)

What about examples of correct and incorrect use of Bayes' Theorem? I don't feel equal to executing that, but DNA evidence in a criminal case could be illuminating. As a sketch, it may show that of 7 billion people alive today, the blood at the scene came from any one of just 10,000 people of which the accused is one. Interesting, but not absolute. At least 9,999 of the 10,000 are innocent. Probability of mistake or malfeasance by the testing laboratory also needs to be considered. Then there's sports drug testing, disease screening with imperfect tests and rare true positives, etc. 141.101.76.82 09:42, 17 October 2018 (UTC)

Where is the next comic button for this page? IYN (talk) 15:46, 17 October 2018 (UTC)