1718: Backups

Title text: Maybe you should keep FEWER backups; it sounds like throwing away everything you've done and starting from scratch might not be the worst idea.

Explanation

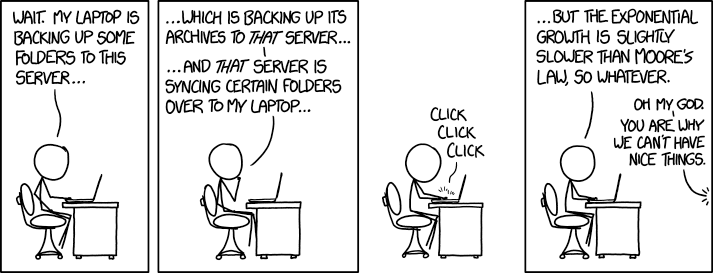

On his laptop, Cueball traces a cyclic path along which his files are being copied from storage to storage. His laptop (presumably the one he is on) is sending its files to a server, which sends its files to another server, which in turn syncs a certain selection of files back to his laptop. Cueball determines that this setup leads to exponential growth, implying that each node in the cycle simply copies files over to the next without any effort to avoid duplicates. Indeed, each time a set of files completes a full cycle, duplicates of the same files are propagated.
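The growth can be illustrated with a toy model. The sketch below is only an assumption about how such a loop might behave, not a description of Cueball's actual setup: the function name `simulate_cycle`, the single shared `fraction`, and the starting sizes are all hypothetical. Each node keeps what it already has and adds a fixed fraction of the previous node's data, with no deduplication.

```python
def simulate_cycle(laptop, server_a, server_b, fraction, cycles):
    """Return the laptop's total size after each full backup cycle.

    Hypothetical model: every node keeps its old data and appends a fixed
    fraction of the upstream node's data, never removing duplicates.
    """
    history = [laptop]
    for _ in range(cycles):
        server_a += fraction * laptop    # laptop backs up some folders to server A
        server_b += fraction * server_a  # server A backs up its archives to server B
        laptop += fraction * server_b    # server B syncs certain folders back to the laptop
        history.append(laptop)
    return history


# Assumed starting point: 100 units on the laptop, empty servers, 10% copied per hop.
sizes = simulate_cycle(laptop=100.0, server_a=0.0, server_b=0.0, fraction=0.1, cycles=80)

# Growth factor from one cycle to the next.
ratios = [later / earlier for earlier, later in zip(sizes, sizes[1:])]
print(ratios[:3], ratios[-3:])
```

In this model the cycle-to-cycle ratio settles to a constant greater than 1, which is the signature of exponential growth: the amount added per cycle is proportional to what is already stored, rather than being a fixed quantity.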

Moore's Law is an observation in computer engineering, first made by Gordon Moore in 1965 and now usually stated as follows: the number of transistors that fit on a chip doubles approximately every two years. Cueball, who was rather alarmed, calms down when he realizes that the exponential growth of his backups is slower than that of Moore's Law. He reasons that as long as he keeps up with the forefront of information storage, he will never run out of room. Assuming available disk capacity is proportional to the number of transistors (this is roughly true for solid-state disks) or otherwise keeps pace with Moore's Law, his files must take more than two years to propagate through the two servers and back to his laptop enough times to double in size. That implies either an extremely slow transfer or an extremely weird backup system.
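To put a number on "slower than Moore's Law", the sketch below converts a doubling time into a per-cycle growth rate. The two-year doubling time comes from the paragraph above; the monthly backup cycle and the function names are assumptions made purely for illustration.

```python
import math


def max_growth_per_cycle(doubling_years, cycles_per_year):
    """Fractional growth per cycle that doubles the data in exactly doubling_years."""
    return 2 ** (1 / (doubling_years * cycles_per_year)) - 1


def doubling_time_years(growth_per_cycle, cycles_per_year):
    """Years needed for the data to double at a fixed fractional growth per cycle."""
    return math.log(2) / (cycles_per_year * math.log(1 + growth_per_cycle))


MOORE_DOUBLING_YEARS = 2.0  # doubling time taken from the explanation above
CYCLES_PER_YEAR = 12        # assumption: one full backup loop per month

limit = max_growth_per_cycle(MOORE_DOUBLING_YEARS, CYCLES_PER_YEAR)
print(f"Growth per cycle that matches Moore's Law: {limit:.2%}")

# A hypothetical backup loop growing 2% per monthly cycle stays under the limit.
print(f"Doubling time at 2% per cycle: {doubling_time_years(0.02, CYCLES_PER_YEAR):.2f} years")
```

Under these assumptions, Cueball is safe only as long as his data grows by a bit less than 3% per monthly cycle; anything faster would outrun the storage curve he is relying on.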

The phrase "[this is] why we can't have nice things" is often used in response to incidents where someone abuses a well-meaning feature, with the abuse ultimately wiping out any benefits the feature was supposed to bring. In the comic, the person off-screen is commenting on the fact that Cueball is not using advances in storage capacity in a responsible manner. That is, rather than using the increased capacity to store more useful information, he is simply using it as a workaround to avoid having to make his backup strategy more efficient.

This concept is further expanded upon in the title text when somebody, presumably the off-screen speaker, notes that Cueball may be better off taking fewer backups in the hopes of losing some data. Typically backups are taken in the hopes of not losing programs and data. However, if the inefficient backup solution presented is representative of the other things Cueball has created, it may be better to have it all be lost and in effect force it to be re-created in a hopefully superior way.

This Cueball resembles the Cueball who owns the computer in 1700: New Bug, and perhaps also the one in the Code Quality series (1513: Code Quality and 1695: Code Quality 2), where Cueball speaks with Ponytail.

Poor backup strategies are referenced in 1360: Old Files.

Transcript

- [Cueball is sitting in an office chair at his desk, working on his laptop.]

- Cueball: Wait. My laptop is backing up some folders to this server...

- [Cueball scratches his chin in thought.]

- Cueball: ...Which is backing up its archives to that server...

- Cueball: ...And that server is syncing certain folders over to my laptop...

- [In a frame-less panel Cueball clicks on his laptop keyboard.]

- Click click click

- [Cueball is back to working normally on his laptop. A voice speaks to him from off-panel as indicated with a starburst at the right frame.]

- Cueball: ...But the exponential growth is slightly slower than Moore's law, so whatever.

- Off-panel voice: Oh my God.

- Off-panel voice: You are why we can't have nice things.

Discussion

I think this makes more sense if only a small portion of all files from the laptop complete the ENTIRE loop. If the total percentage of files which complete the entire loop is 0.0004%, and he backs up once a month, that should give him exponential growth slightly smaller than Moore's Law. At 18 months, his total file size would be about 168% of the original. 172.68.58.245 22:03, 10 August 2016 (UTC)

- "Cueball: Wait. My laptop is backing up some folders to this server..." Because of that I agree with you. It's saying "Some" folders are being backed up. The wording heavily implies it's not everything in the computer being backed up just a part. 141.101.98.61

- Even if all the files do make the round trip they might use good deduplication. If all the files round trip but only the changes and a few kilobytes of metadata per file are duplicated then the growth can be exponential. This is only true if none of the backups are compressed or encrypted, though. 108.162.219.232 (talk) (please sign your comments with ~~~~)

Also, the title text may refer to the fact that often, when you lose a project and have to start over from scratch, the project becomes so much better. 162.158.133.102 01:55, 11 August 2016 (UTC)

This happens. It can really surprise you when the exponential curve is flat enough. We had a case where we kept a log of the backups on a server that was itself backed up. This went fine for years, until at some point we ran out of backup space and found that backups of the logs of backups consumed over 99% of our disk space. 162.158.87.11 10:04, 11 August 2016 (UTC)

- Tee hee! This is why the first thing I exclude from backup is the log directory, or the whole /var tree (with a few selected exceptions, like /var/spool/cron/crontabs - this is a royally misplaced location, it should go under /etc). The logs that need to be kept are sent to a log server, online, by the logger daemon itself. If there's no log server (small systems) at least send the logs to backup place during log rotation. -- 162.158.203.151 18:59, 11 August 2016 (UTC)

- I once managed to back up / to the backup disk at /media/Backup Disk. D'oh. Backupception. --162.158.150.228 12:17, 11 August 2016 (UTC)

I think there should be an explanation of why this setup leads to exponential growth. IMO, it is linear, or polynomial of degree 2 at most. Let's assume the notebook contains only one file: /A.txt. After one backup cycle there are two files: /A.txt and /backups/A.txt. After the next one, there are three: /A.txt, /backups/A.txt and /backups/backups/A.txt. Thus the number of files only grows linearly. Only the path information grows faster: the number of additional directories in the files' paths grows with the square of the number of cycles (it's the sum of all integers from 1 to the cycle count). Can anybody explain the exponential growth? Epaminaidos (talk) 06:44, 12 August 2016 (UTC)

- The number of files grows exponentially if a percentage of the data, rather than a fixed amount, is backed up in each cycle. --162.158.83.228 07:31, 12 August 2016 (UTC)

- Can you elaborate on this? I don't get it. Epaminaidos (talk) 09:50, 12 August 2016 (UTC)

- I guess most backup systems keep older backups. First, there's /A.txt. Next, there's /A.txt and /backup/2016-08-12/A.txt. Third, there's /A.txt, /backup/2016-08-12/A.txt, /backup/2016-08-13/A.txt and /backup/2016-08-13/backup/2016-08-12/A.txt. --SlashMe (talk) 09:38, 12 August 2016 (UTC)

- Cueball is talking about "syncing folders", not about a backup-system that keeps old versions. Epaminaidos (talk) 09:50, 12 August 2016 (UTC)

- ????? The first two panels say they are creating back-ups. 108.162.210.196 12:35, 12 August 2016 (UTC)

- Actually, there are two backup systems and one sync involved. --SlashMe (talk) 13:17, 12 August 2016 (UTC)
