2697: Y2K and 2038

Title text: It's taken me 20 years, but I've finally finished rebuilding all my software to use 33-bit signed ints.

Explanation

The Y2K bug, or more formally the year 2000 problem, was a class of computer errors caused by two-digit software representations of calendar years mishandling the year 2000, such as by treating it as 1900 or displaying it as 19100. The year 2038 problem is a similar issue with timestamps in Unix time format, which count seconds since January 1, 1970 (UTC) and will overflow a signed 32-bit binary representation one second after 03:14:07 UTC on January 19, 2038.
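Both failure modes can be illustrated with a minimal Python sketch. The function names (`buggy_year_string`, `two_digit_to_year`, `wrap_int32`) are hypothetical and chosen only for illustration. Python integers do not overflow on their own, so the 32-bit wraparound is simulated by hand.

```python
from datetime import datetime, timedelta, timezone

def buggy_year_string(years_since_1900: int) -> str:
    # Display bug: hard-code "19" and append a counter of years since 1900.
    # The counter reaches 100 in the year 2000, producing "19100".
    return "19" + str(years_since_1900)

def two_digit_to_year(yy: int) -> int:
    # Storage bug: assume every two-digit year belongs to the 1900s.
    return 1900 + yy

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)
INT32_MAX = 2**31 - 1

def wrap_int32(value: int) -> int:
    # Simulate two's-complement wraparound of a signed 32-bit integer.
    return (value + 2**31) % 2**32 - 2**31

# Last second a signed 32-bit Unix timestamp can represent.
last_moment = EPOCH + timedelta(seconds=INT32_MAX)
# One second later the counter wraps to the most negative value.
wrapped = EPOCH + timedelta(seconds=wrap_int32(INT32_MAX + 1))

print(buggy_year_string(100))   # year 2000 shown as 19100
print(two_digit_to_year(0))     # "00" read as 1900
print(last_moment.isoformat())  # 2038-01-19T03:14:07+00:00
print(wrapped.isoformat())      # 1901-12-13T20:45:52+00:00
```

The last line shows why an unpatched system would appear to jump back to December 1901 rather than simply stopping.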

While initial estimates were that the Y2K problem would require about half a trillion dollars to address, its potential severity was widely recognized several years in advance. Computer and software manufacturers and their corporate and government users cooperated to an unprecedented degree in testing and upgrading affected systems, at a cost substantially below the early estimates. On New Year's Day 2000, few major errors actually occurred. Those that did usually did not disrupt essential processes or cause serious problems, and the few that did were usually addressed within days to weeks. The code reviews involved also made it possible to correct other errors and add various enhancements, which often made up at least in part for the cost of correcting the date bug.

It is unclear whether the 2038 problem will be addressed as effectively in time. However, documented experience with the Y2K bug, increased software modularity, and wider access to source code have already allowed many otherwise vulnerable systems to upgrade to wider timestamp and date formats, so there is reason to believe that it may be even less consequential and expensive. The 2038 problem has previously been mentioned in 607: 2038 and 887: Future Timeline.

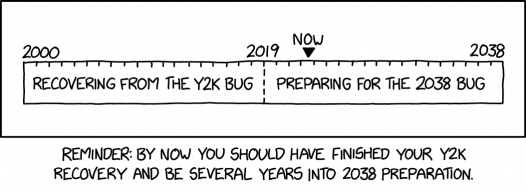

This comic assumes that the 38 years between Y2K and Y2038 should be split evenly between recovering from Y2K and preparing for Y2038. That would put the split point in 2019. The caption points out that it's now, in 2022, well past that demarcation line, so everyone should have completed their "Y2K recovery" and begun preparing for the year 2038. It is highly unlikely that more than a very few consequential older systems still suffer from the Y2K bug, as systems built to operate this millennium handle years after 1999 correctly. Whether Y2K was actually as big a problem as it was made out to be remains hotly debated. The main arguments fall into two general camps: "nothing bad happened, Y2K would have overwhelmingly been an inconvenience rather than a problem" vs. "very little happened only because of the massive effort put into prevention". It is unlikely that there will ever be a conclusive answer to the question, with the truth probably lying somewhere between those two extremes. Whatever the answer may be, the reaction to Y2K did result in a significant push towards, and a rise in public awareness of, clean and futureproofed code.

The title text refers to replacing the 32-bit signed Unix time format with a hypothetical new 33-bit signed integer time and date format, which is very unlikely because almost all contemporary computer data structure formats are allocated no more finely than in 8-bit bytes. Doing this may seem complicated to new software developers, but widening a stored value, as when two-digit years were expanded to four digits for Y2K, was a normal fix among engineers of Randall's generation, who learned to code when computer memory space was still at a premium. The C language itself only guarantees minimum widths: a "short" is at least 16 bits and a "long" is at least 32 bits, and on many older platforms "long" was exactly the 32-bit type. C does allow programmers to declare a 33-bit integer as a bit-field inside a struct (for example "long long x:33", which common compilers such as GCC and Clang accept), but such a field is really just treated as a 64-bit number that gets truncated when stored, and would be slow unless running on custom hardware that supports such a type natively (which no mainstream hardware currently does). Taking 20 years to develop and implement such a format is not entirely counterproductive, as it would add another 68 years of capability by moving the overflow from 2038 to 2106, but it is a far less efficient use of resources than upgrading to the widely available and supported 64-bit Unix time replacement format and software compatibility libraries.
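The "68 years" figure can be checked with a short calculation. This is a sketch only, assuming the 33-bit format would keep the same 1970 epoch and one-second resolution as Unix time; `last_representable` is a hypothetical helper name.

```python
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)

def last_representable(bits: int) -> datetime:
    # Largest value of a signed integer with the given number of bits,
    # interpreted as seconds since the Unix epoch.
    # (Not usable for 64 bits: the result exceeds Python's datetime range.)
    max_seconds = 2 ** (bits - 1) - 1
    return EPOCH + timedelta(seconds=max_seconds)

limit_32 = last_representable(32)
limit_33 = last_representable(33)

# The one extra bit doubles the positive range, adding 2**31 seconds.
extra_years = (limit_33 - limit_32).total_seconds() / (365.25 * 86400)

print(limit_32.isoformat())   # 2038-01-19T03:14:07+00:00
print(limit_33.isoformat())   # 2106-02-07T06:28:15+00:00
print(round(extra_years, 2))  # 68.05
```

A 33-bit signed counter therefore runs out at the same moment as a 32-bit unsigned one, in February 2106.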

Transcript

[A timeline rectangle with 37 short dividing lines between its two ends, dividing it into 38 minor sections. The label "2000" is above the leftmost edge, "2038" is above the rightmost edge, and "2019" is directly over the central division, which starts the section covering that year. That division is extended into a dotted line spanning the whole height of the timeline, dividing it into two equal 19-section halves. The left half is labeled "Recovering from the Y2K bug" and the right half is labeled "Preparing for the 2038 bug". A triangular arrowhead labeled "Now" above the timeline points to a position most of the way through the section that would represent the year 2022.]

[Caption:] Reminder: By now you should have finished your Y2K recovery and be several years into 2038 preparation.

Discussion

Y2K issues solved back in 1996. Even wrote a letter to the Board of Trustees. 2038 Problems are not-my-concern. Retired 9/30/2022. 172.70.110.236

- Many of the people who helped solve the Y2K problem were pulled out of retirement. Lots of the issues were in old COBOL software, and there weren't enough active programmers who were competent in COBOL. So keep your resume ready. Barmar (talk) 20:07, 11 November 2022 (UTC)

this is so weird I just finished a research assignment on the Y2038 problem 172.71.166.223 18:27, 11 November 2022 (UTC)

Somewhere there is an essay about the unexpected synergy between the Y2K bug and the burgeoning open source movement, which may or may not be useful for the explanation. 172.70.214.243 20:18, 11 November 2022 (UTC)

- I'm sure Dave in Nebraska has updated his app https://xkcd.com/2347/ 172.70.91.57 17:18, 14 November 2022 (UTC)

- https://www.livehistoryindia.com/story/eras/india-software-revolution-rooted-in-y2k is a fascinating essay too. 172.70.214.151 21:03, 11 November 2022 (UTC)

- I wouldn't be surprised if there's such an essay, but I suspect it's more of a coincidence. The late 90's was also when the Internet was really taking off, and that may be more of a contributor. Barmar (talk) 23:04, 11 November 2022 (UTC)

- All involved what epidemiologists call coordinated or mutually reinforcing causes, IMHO. 172.71.158.231 01:41, 12 November 2022 (UTC)

Speaking of which, what comes after Generation Z? Generation AA? ZA? Z.1? Help! 172.70.214.243 07:24, 12 November 2022 (UTC)

- Generation Alpha 172.69.34.53 07:27, 12 November 2022 (UTC)

- Zuckerbergs Army. --Lupo (talk) 15:18, 12 November 2022 (UTC)

- The Legion of the Doomed 172.70.162.56 10:20, 14 November 2022 (UTC)

- Generation 😊 Kimmerin (talk) 08:29, 17 November 2022 (UTC)

I've been unable to confirm this so I'm moving it here: A major problem had struck IBM mainframes on and after August 16, 1972 (9999 days before January 1, 2000) that caused magnetic tapes that were supposed to be marked "keep forever" to instead be marked "may be recycled now."[actual citation needed] 172.71.158.231 07:37, 12 November 2022 (UTC)

- I have heard of Y2K problems showing up in 1970 in calculations for thirty-year mortgages. Philhower (talk) 14:12, 14 November 2022 (UTC)

Does the arrow move over time? ... should it? (I think so!) It could be done server side and only regulars would [see, sic] that it changes over time. Then... perhaps we could see different versions of the strip cached on the Internet. --172.71.166.158 08:30, 12 November 2022 (UTC) It isn't, of course, but if it was a .GIF with ultralong replace-cycles then only those who kept the image active would see the arrow move in real-time. (It would reset to now's "now" upon each (re)loading, so it would have an even more exclusive audience, aside from those that cheat with image(-layer) editing. ;) ) 172.70.162.57 13:32, 12 November 2022 (UTC)

Should we mention that this concerns a specific year in a specific calendar? As far as I know there was also fear of a similar bug in Japan recently. However, Wikipedia seems not to be up to date about it. --Lupo (talk) 15:18, 12 November 2022 (UTC)

Does anyone know of an actual program or OS that stored the year as two characters instead of a single byte? I have (and had back then) serious doubts that any problems existed. Even the reported government computers had people born prior to 1900 entered, so they already had to have better precision than "just tack on 1900." Even using a single signed byte would still have been good for another 5 years from now. SDSpivey (talk) 17:22, 12 November 2022 (UTC)

- In my experience (I lived and worked through the Y2K preparations) it wasn't so much "an actual program", or necessarily a fundamental limitation of an entire OS (though the roots of the problem effectively date back to key decisions surrounding the development of the IBM System/360 in the 1960s), but a matter of how data was held in human-readable but space-saving format. Someone in the '70s (or even up into the '90s) may have decided their system could store some date as the six characters representing DDMMYY (or any of the other orders) secure in the knowledge that the century digits were superfluous - and would have perhaps sent the footprint of a standard record over some handy packable length for the system, say 128 bytes. Which was a lot in those days.

- (If the year value had been recorded in 16bit binary, or even 2x7bit or doubled 6-bit, it could have been as good for the computer, but oh the fuss to convert to and from a human-orientated perspective. And it worked neatly enough, right?)

- And a useful implementation might be used, in some form or other, for a long time... Sometimes the storage system is upgraded (kilobytes? ha, we have megabytes of space now!) and the software to handle it might be ported and even rewritten, but at each stage the extra data has to match the old program, and the new program has to read and write the current data, however kludged it actually is. And it works, at least under the care of those who dabble in the dark arts of its operation. And not many others are bothered or even have any idea of what lies beneath the surface.

- Until somebody starts to audit the issue and asks everyone to poke around and check things... Then things get sorted in-situ or a much needed (YMV!) change of process is swapped in, in the place of old and (possibly) incorrect hacks. 172.69.79.133 20:00, 12 November 2022 (UTC)

- Sometimes the "savings" of storing data in a compact form are exceeded by the "cost" of having to convert it between the convenient-to-use form and the compact form. I used to work on a system that used 32-bit words for all data types: characters, shorts, longs. When we started running out of space, we "manually" packed our data, stuffing multiple shorts and bytes into words. But in some cases, the additional code needed to pack/unpack would have taken more space than what we'd have saved in the data, without even looking at the processing time cost. BunsenH (talk) 05:52, 13 November 2022 (UTC)

- Not sure about storing each digit as a *character*, but IBM mainframes have supported packed decimal formats where each decimal digit was stored in a 4-bit nibble. That format can give more intuitive results from decimal fraction arithmetic for applications such as currency. But, I've heard of the same format being used for integer applications such as page numbers, etc because it was familiar and readable on hex dumps. Philhower (talk) 14:12, 14 November 2022 (UTC)

- Binary-coded decimal... Loads of interesting uses (including precision decimal fractions), but of course largely fallen out of favour for various technical and logistical reasons. 172.70.86.61 14:44, 14 November 2022 (UTC)

- The first computerised passport system for the UK had a y2K issue. In fact, it was designed in, because it was supposed to be replaced before 1999. Unfortunately, progress with its replacement was running late. We thought that we could get away with two digits for certain dates because the software was going to be thrown away before the end of 1999. And yes, two digit years were common in COBOL programs because decimal numbers coded using ASCII or EBCDIC were the default for numeric data. Jeremyp (talk) 15:32, 13 November 2022 (UTC)

- Numbers stored as characters are a basic data type on IBM mainframes (look up Zoned Decimal and Packed Decimal). The processor itself has instructions to compute directly in those formats. This has the advantage of saving a lot of processing time, as there is basically no conversion from user input nor for display. It also avoids the precision errors that floating point formats have. And it is way easier to read in memory dumps. The waste of space (using a whole byte to store a single digit) is not much of an issue considering even early computers could save kilometres of data on tapes. Having the programs run fast was more important. Shirluban 172.71.126.41 17:17, 15 November 2022 (UTC)

- 1. Having done programming since 1966, I know that much data was stored on 80-character cards (and way before that year and the IBM System/360) and using 2 characters (2.5% of the card) to store the "19" was not acceptable. As processes moved into the tape and disk world, human nature tended to not expand the field to 4 characters (the future is a long way off until, suddenly, it isn't). 172.70.178.65 07:57, 13 November 2022 (UTC)

- 2. I actually saw a Y2K failure. It occurred at the beginning of 1999 when a job scheduling program scheduled a job for the year 1900 because it was always keeping the schedule active a year in advance. The scheduling software had actually been fixed but the upgraded version had not been installed yet, so there was no significant outage. 172.70.178.64 08:02, 13 November 2022 (UTC)

"an actual program or OS that stored the year as two characters" In years 2000-2002, it was common to see dates on web-pages showing as "19100". I/we always assumed the 19 was hard-coded, the 1-99 was a script, just concatenated. PRR 172.70.130.154 06:52, 14 November 2022 (UTC)

"I've never heard of anyone actually recompiling to a 33-bit integer format" - that's not what was said, but it seems to be about programming so as to pack 33-bits of precision across (or within) whatever standard bit-boundaries the system normally provides for. Which is not so fanciful, and used to be a good creative coding practice, if done well. See 8x7bit to/from 8x7bit packing or unpacking (or as an in-transit stage), which was a regular requirement at one time (arguably still is, but mostly invisibly to the user, in the usually 6-bit rationalisation that is MIME). But the edit above doesn't preclude that interpretation, so just noting this here. 141.101.107.157 13:43, 14 November 2022 (UTC)

"systems built to operate this millennium handle years after 1999 correctly" Not necessarily. At least one UK bank (the one where I worked on Y2K) used "sliding century windows", where they pick a number X in the range 0 to 9, and any year where the YY of CCYY is greater than 10X is considered to be `this century', else last century, and in a few decades X can be moved along a bit. Not our team's decision, and by the time we heard about it it was far too late to complain about what a bloody stupid idea it was. The problem wasn't fixed *properly*; it was fixed *cheaply*. 141.101.99.198 08:45, 2 November 2024 (UTC)