2494: Flawed Data

Title text: We trained it to produce data that looked convincing, and we have to admit, the results look convincing!

Explanation

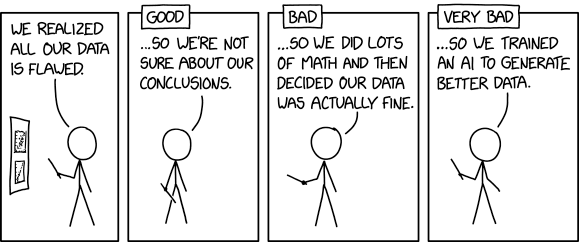

This is another comic about the right and wrong ways to do research when the data are inadequate.

In the first frame, Cueball presents a report on a poster (two graphs with data points and possible fitted curves), admitting that all of the data are actually flawed. He doesn't explain how the data are flawed, for example whether they contradict an expected outcome or suffer from a systematic error in the data-gathering process.

From there, three possible responses to this realization are shown, ordered from best to worst.

- Good

In the first scenario, Cueball states they are no longer sure about the conclusions they had drawn from the flawed data. This is, of course, the scientifically appropriate decision. The less reliable the data are, the less reliable the conclusions that can be drawn. Ideally, flawed data would be discarded altogether, but there are situations in which better data are not available, so a compromise may be to draw tentative conclusions, but make clear that those are uncertain, due to issues with the data.

- Bad

In the second scenario, Cueball explains that after heavy manipulation ("doing a lot of math") of their flawed data, they decided the data were actually fine. There are a number of methods that can be used to manipulate or "clean" data, with varying levels of complexity and reliability. Some of these methods may be valid in certain situations, but applying them after the initial analysis failed is highly suspect. The likelihood, in such a case, is that the researchers tried different methods of data manipulation, one after another, until they found one that gave the results they wanted. This is clearly highly subject to the biases of the researchers (both conscious and unconscious) and is much less likely to result in accurate conclusions. Unfortunately, this approach occurs in research more often than it should, and Randall is making clear that it's "bad".
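The effect of trying one cleaning method after another can be illustrated with a small simulation. The following is only a sketch under stated assumptions, not anything taken from the comic: both groups are drawn from the same distribution, so any "significant" difference is a false positive; the cleaning functions, `CLEANERS`, and `THRESHOLD` are hypothetical names made up for illustration; and `|t| > 2` is used as a rough stand-in for a conventional significance test.

```python
import random
import statistics


def welch_t(a, b):
    """Welch's t statistic for two independent samples."""
    va, vb = statistics.variance(a), statistics.variance(b)
    return (statistics.mean(a) - statistics.mean(b)) / ((va / len(a) + vb / len(b)) ** 0.5)


def raw(xs):
    """The pre-specified analysis: use the data as collected."""
    return list(xs)


def trim_extremes(xs, k=2):
    """Drop the k smallest and k largest values."""
    return sorted(xs)[k:-k]


def drop_beyond_sd(xs, z=1.5):
    """Drop points more than z standard deviations from the mean."""
    m, s = statistics.mean(xs), statistics.stdev(xs)
    return [x for x in xs if abs(x - m) <= z * s]


def keep_first_half(xs):
    """Pretend only the earlier measurements were reliable."""
    return list(xs[: len(xs) // 2])


# Hypothetical menu of "cleaning" options a researcher might try in turn.
CLEANERS = [raw, trim_extremes, drop_beyond_sd, keep_first_half]
THRESHOLD = 2.0  # assumption: |t| > 2 roughly approximates p < 0.05


def simulate(trials=2000, n=30, seed=0):
    """Return (false-positive rate of the raw analysis,
    false-positive rate when any cleaner is allowed to 'win')."""
    rng = random.Random(seed)
    single_hits = any_hits = 0
    for _ in range(trials):
        # Both groups come from the same distribution: there is no real effect.
        a = [rng.gauss(0, 1) for _ in range(n)]
        b = [rng.gauss(0, 1) for _ in range(n)]
        results = [abs(welch_t(c(a), c(b))) > THRESHOLD for c in CLEANERS]
        single_hits += results[0]
        any_hits += any(results)
    return single_hits / trials, any_hits / trials


if __name__ == "__main__":
    single_rate, any_rate = simulate()
    print(f"raw analysis only:        {single_rate:.3f}")
    print(f"best of {len(CLEANERS)} cleaning methods: {any_rate:.3f}")
```

The second rate can never be lower than the first, because the raw analysis is itself one of the candidates. Under these assumptions it typically comes out noticeably higher, which is the sense in which picking a method after seeing the results inflates the chance of a spurious finding.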

- Very bad

In the third and final scenario, Cueball explains that they scrapped all the flawed data. However, instead of collecting new data by properly redoing the research, measurements, or tests, they trained an artificial intelligence (AI) to generate "better" data, guided by nothing but the desire to match a target outcome. These are, of course, not real data,[citation needed] but merely a simulation of data, selectively sieving statistical noise for desirable qualities. And since the researchers are probably looking for a specific result, they are training the AI to generate data that support it. This approach is "very bad": it not only produces no useful science, but also means that future researchers would be working from entirely artificial data. Doing so would be destructive to science and would be considered highly unethical by any research body or association. The only purpose of such a method would be to convince others that you had proven something interesting, rather than to determine what is true (although the researchers might gain some experience in AI programming along the way). AI is a recurring theme on xkcd.

The title text describes the results of the very bad approach. That the researchers got exactly the data they were looking for is made clear when they state: "We trained it to produce data that looked convincing, and we have to admit, the results look convincing!" The AI was, of course, trained to provide data that look convincing, which is why they are so convinced by the results.

Transcript

- [Cueball is pointing a stick at a poster hanging behind him while addressing an unseen audience. There are two graphs on the poster with data points and fitting curves.]

- Cueball: We realized all our data is flawed.

- [The next three panels all have a label in a frame going over the top of each panel's frame. The poster can no longer be seen in the rest of the panels.]

- Label: Good

- [Cueball has taken the stick down.]

- Cueball: ...So we're not sure about our conclusions.

- Label: Bad

- [Cueball holds the pointer almost as in the first panel.]

- Cueball: ...So we did lots of math and then decided our data is actually fine.

- Label: Very bad

- [Cueball holds the pointer so it points upwards. Also, he lifts his other hand a bit up.]

- Cueball: ...So we trained an AI to generate better data.

Discussion

For the first time in a very long time I was the first to make an attempt at the main explanation. I guess this comic came out very late then? Or just late up on explain xkcd? Seems like the Monday comic first came up on Tuesday in many countries including those in Europe. But guess it was still Monday in the US, at least in the western parts? I hope this is not as bad an attempt as Cueball's research strategies in the last panel :-) --Kynde (talk) 07:05, 27 July 2021 (UTC)

- Isn't it related to a recently published article[1][2] about bias introduced into AI by humanly-biased data?

- Reports of bias in AI have been in the news for several years. Most notably, facial-recognition systems that are bad at distinguishing faces of black and brown people. Barmar (talk) 13:58, 27 July 2021 (UTC)

- "On two occasions I have been asked [by members of Parliament], 'Pray, Mr. Babbage, if you put into the machine wrong figures, will the right answers come out?' I am not able rightly to apprehend the kind of confusion of ideas that could provoke such a question." - Charles Babbage; note that a century and a half has passed since that quote, and people STILL somehow expect that a computer will be able to reach correct results based on wrong data. -- Hkmaly (talk) 17:23, 27 July 2021 (UTC)

This explanation has some good ideas for what these things mean, but it goes into them in excessive detail, which doesn't leave a lot of room for other ideas to be included side-by-side. I think that might be common. I was just thinking that there are a lot of ways extra math is used to produce worse conclusions: as soon as you have to work more to find what is good, Occam's razor says you are less likely to be relevant. Similarly, there are a lot of ways that AI is used to work with data, but its power greatly surpasses its ability to reflect the underlying meaning of things. For example, the existing data can be extended without being scrapped, and look completely real in every known respect, but that doesn't mean that any new information is included in what is generated, since the only data to work with is what was already there. 108.162.219.98 16:19, 27 July 2021 (UTC)

There are various techniques in machine learning to augment the training data, which can include generating fake data that looks like the real data; one such technique is to use a generative adversarial network (GAN).

On first reading, I was thinking the Good approach would be to go out and run new experiments and measurements, incorporating the lessons from their flawed data, to avoid making the same mistakes again. This can be quite expensive, but it is really the only way to increase the validity of the data. Just saying "We can't trust our conclusions," throws away the opportunity to learn from earlier mistakes and come up with better measurements next time. Nutster (talk) 14:38, 28 July 2021 (UTC)

Is this a reference to Biogen? Doing some motivated post hoc subgroup analysis to get Aduhelm approved.

From what I know, the "very bad" approach is becoming common in data science, see the Wikipedia page for Synthetic_data#Synthetic_data_in_machine_learning or, when done on a single feature at a time, Imputation_(statistics). Imputation can be problematic because the data may be missing due to some confounding variable, so filling in values based on the existing ones will bias the results. A slightly related example is class imbalance, where some groups are underrepresented and therefore won't be predicted as accurately as overrepresented groups. Instead of gathering more data, especially more representative data, data scientists will often use something like SMOTE to generate more data. An example of a widely used but frankly bad synthetic dataset is kddcup99. 172.68.142.147 05:25, 11 August 2021 (UTC)
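To make the imputation point above concrete, here is a minimal sketch, assuming a made-up missingness mechanism in which larger values are more likely to go unrecorded. The function names, the cutoff of 55, and the 80% drop rate are all hypothetical choices for illustration, and simple mean imputation stands in for whatever method a real pipeline might use.

```python
import random
import statistics


def mean_impute(values):
    """Replace None entries with the mean of the observed entries."""
    observed = [v for v in values if v is not None]
    fill = statistics.mean(observed)
    return [fill if v is None else v for v in values]


def demo(n=1000, seed=0):
    """Return (true mean, imputed mean, true stdev, imputed stdev)."""
    rng = random.Random(seed)
    truth = [rng.gauss(50, 10) for _ in range(n)]
    # Hypothetical mechanism: values above 55 are usually not recorded,
    # so missingness depends on the very quantity being measured.
    recorded = [None if v > 55 and rng.random() < 0.8 else v for v in truth]
    imputed = mean_impute(recorded)
    return (
        statistics.mean(truth),
        statistics.mean(imputed),
        statistics.stdev(truth),
        statistics.stdev(imputed),
    )


if __name__ == "__main__":
    true_mean, imputed_mean, true_sd, imputed_sd = demo()
    print(f"mean:  true {true_mean:.2f}  vs  imputed {imputed_mean:.2f}")
    print(f"stdev: true {true_sd:.2f}  vs  imputed {imputed_sd:.2f}")
```

Because the filled-in values are computed only from what was recorded, the imputed dataset inherits the bias of the recorded values: in this setup its mean sits below the true mean, and its spread is understated as well.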

Super Bad: So we used a RNG to make completely random data

Ultra Bad: So I just picked my favorite numbers to use as data